The model learns your stroke.

Two golfers miss the same putt differently. The model adapts — and the more you play, the more it knows about you.

Not a future feature.

Already in the app.

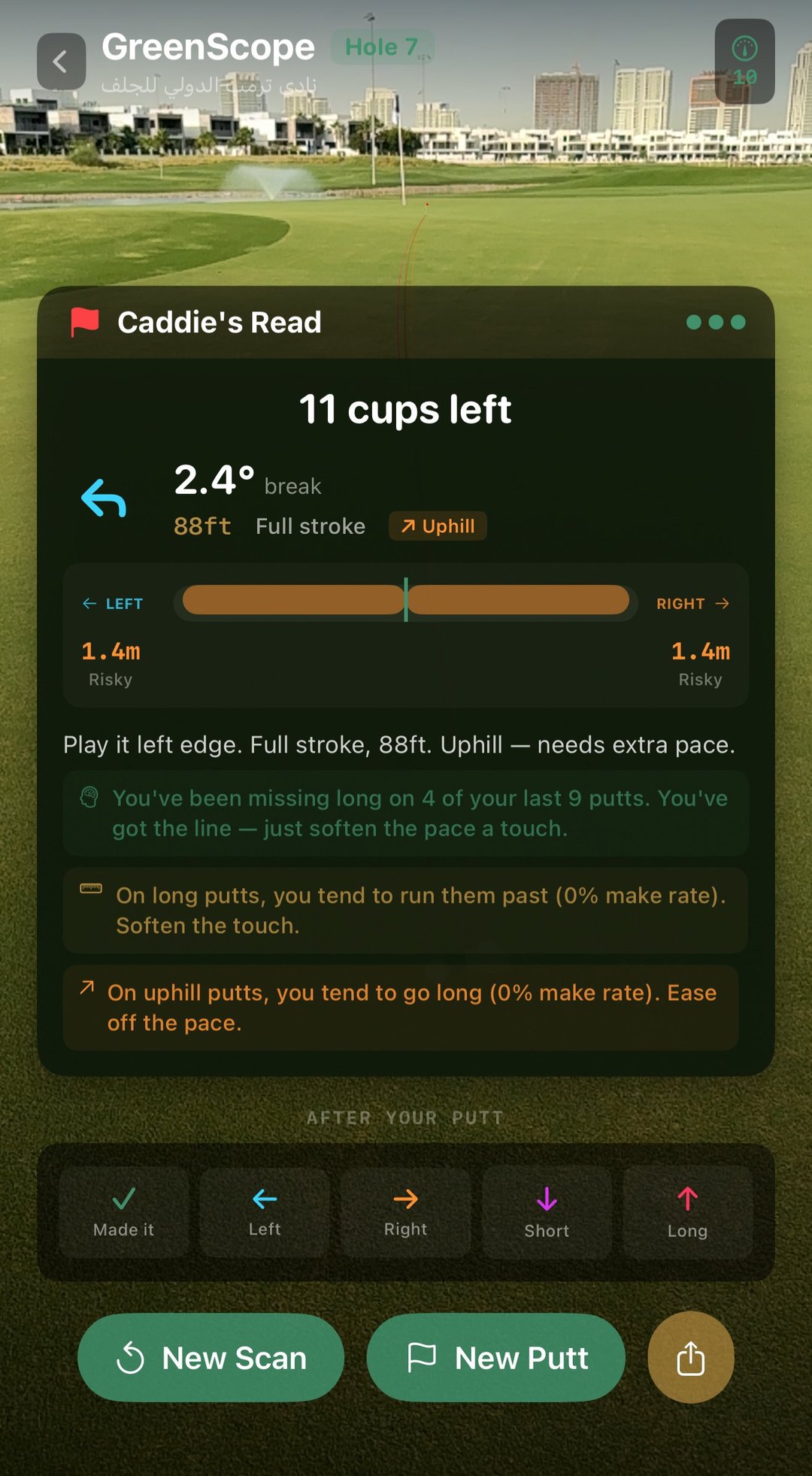

Hole 7 at Trump International Dubai. Two and a half degrees of break, eighty-eight feet, uphill — and three callouts the model wrote after watching the last ten putts of the round.

Different golfer, different callouts. Happening on real greens, today.